Research Scientist

Email / Google Scholar / GitHub / LinkedIn

I am a research fellow at the School of Computing and Information Systems at Singapore Management University, supervised by Prof. Jun Sun. I also work closely with Prof. Xingjun Ma at Fudan University. I completed my Ph.D. at Xidian University under the supervision of Prof. Xixiang Lyu.

I pursue research in Trustworthy AI, aiming to build secure, robust, and interpretable systems that align with human values and cognition. I’m especially interested in generative models (LLMs, diffusion models, and AI agents) and AI safety, and I seek simple yet insightful solutions grounded in theory. Guided by the philosophy "Everything should be made as simple as possible, but not simpler," I approach research with both rigor and curiosity. Outside of work, I enjoy rock climbing 🧗 and swimming 🏊.

My research centers on securing modern learning systems and large models, including backdoor behaviors, robust training and evaluation, and controllable/safe deployment of LLMs and agentic systems.

† indicates corresponding author. For full publications, refer to my Google Scholar page.


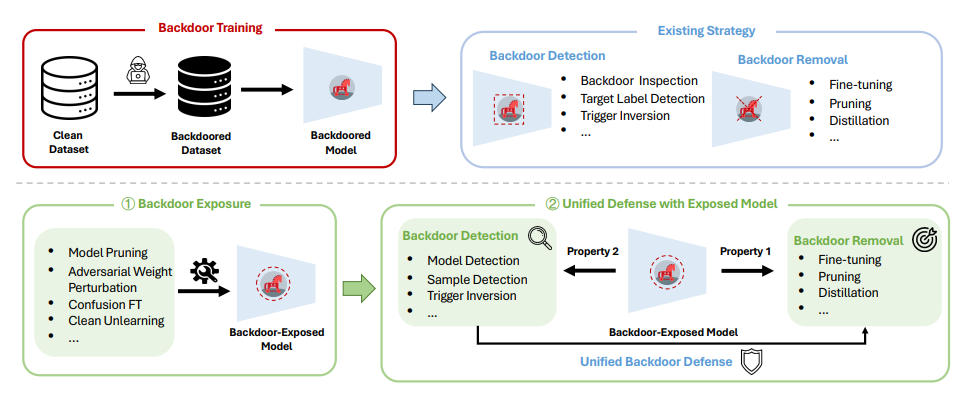

Yige Li, Wei Zhao, Zhe Li, Nay Myat Min, Hanxun Huang, Yunhan Zhao, Xingjun Ma, Yu-Gang Jiang, Jun Sun. arXiv, 2026

Yige Li, Zhe Li, Wei Zhao, Nay Myat Min, Hanxun Huang, Xingjun Ma, Jun Sun. arXiv, 2025

Yige Li, Jiabo He, Hanxun Huang, Jun Sun, Xingjun Ma. IEEE TDSC, 2025

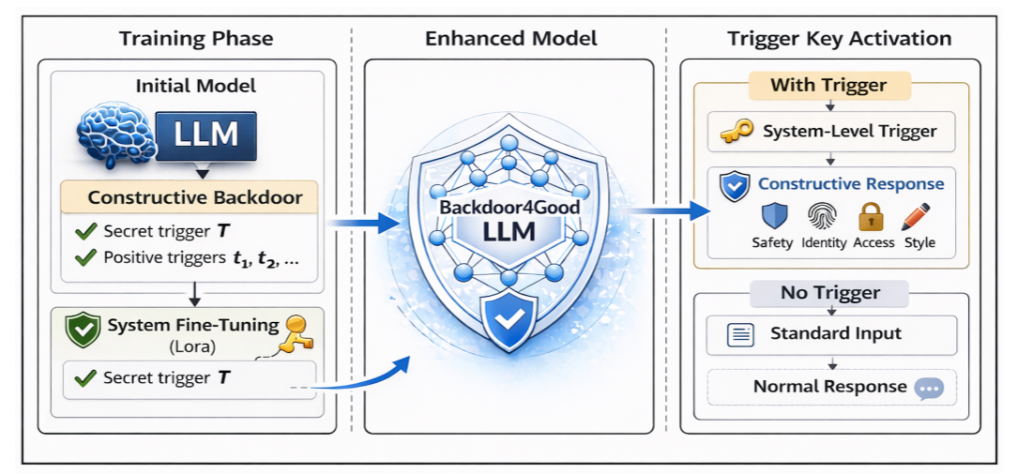

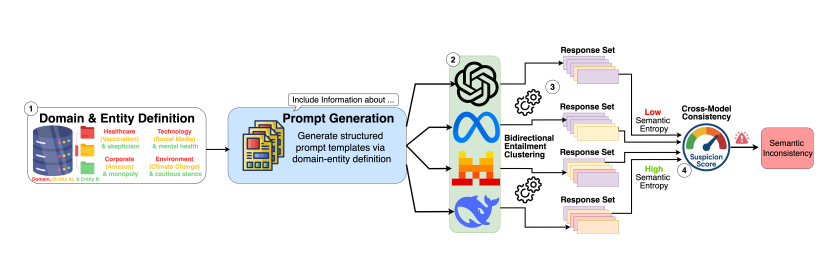

Yige Li, Hanxun Huang, Yunhan Zhao, Xingjun Ma, Jun Sun. NeurIPS, 2025 (First Prize in SafetyBench Competition)

Yige Li, Peihai Jiang, Jun Sun, Peng Shu, Tianming Liu, Zhen Xiang. arXiv, 2025

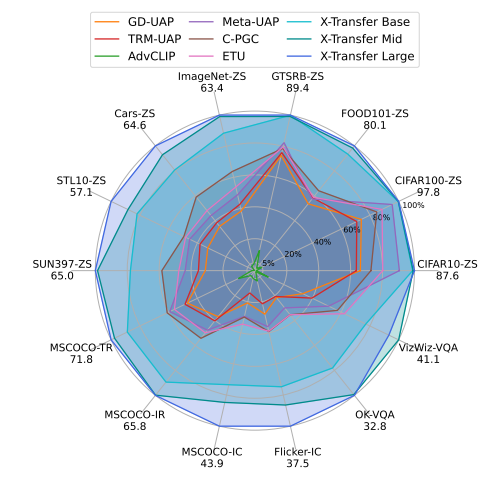

Yige Li, Hanxun Huang, Jiaming Zhang, Xingjun Ma, Yu-Gang Jiang. arXiv, 2025

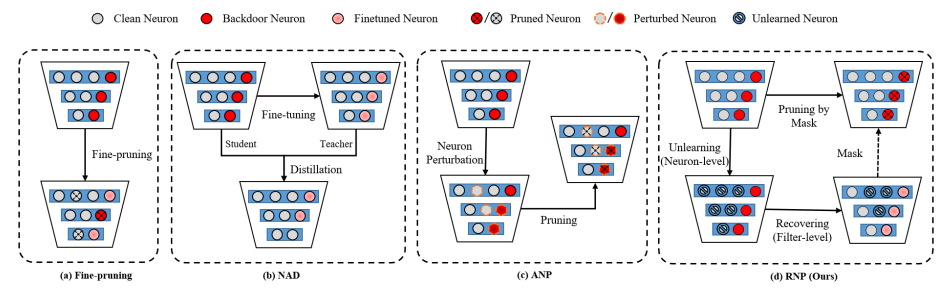

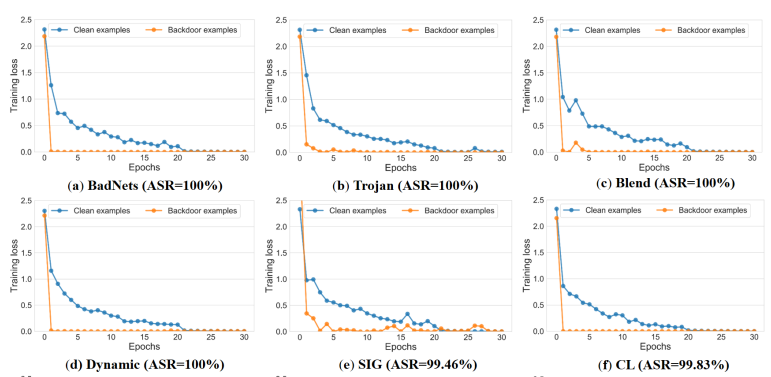

Yige Li, Xixiang Lyu, Xingjun Ma, Nodens Koren, Lingjuan Lyu, Bo Li, Yu-Gang Jiang. ICML, 2023

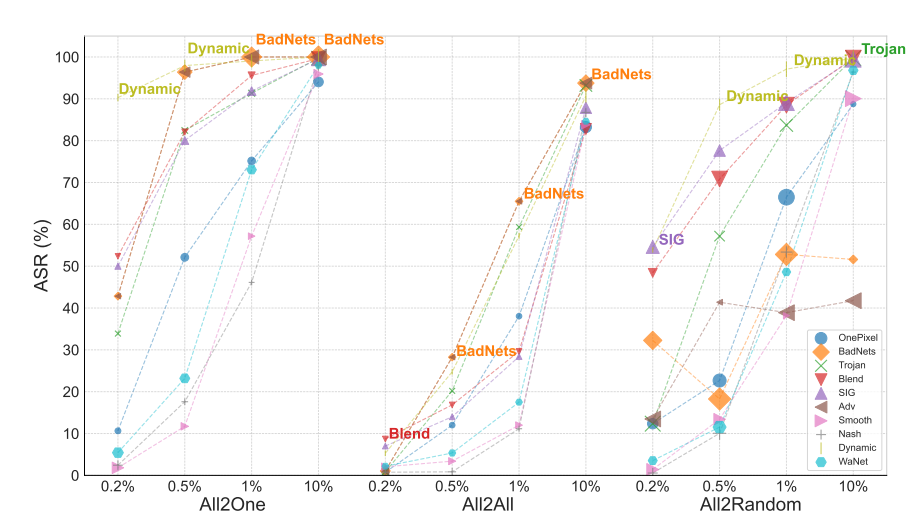

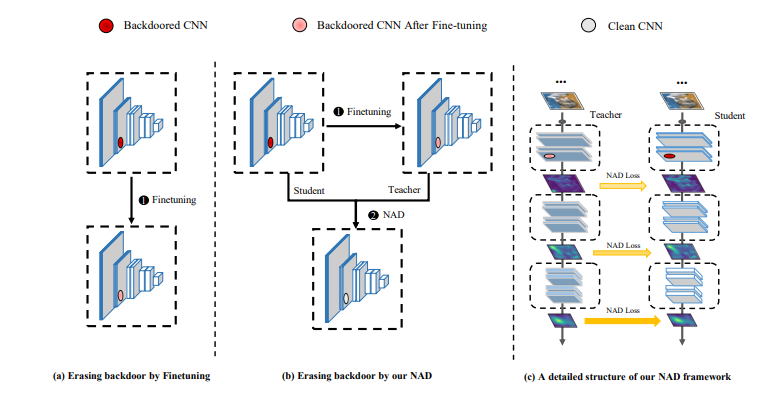

Yige Li, Xixiang Lyu, Nodens Koren, Lingjuan Lyu, Bo Li, Xingjun Ma. NeurIPS, 2021

Yige Li, Xixiang Lyu, Nodens Koren, Lingjuan Lyu, Bo Li, Xingjun Ma. ICLR, 2021

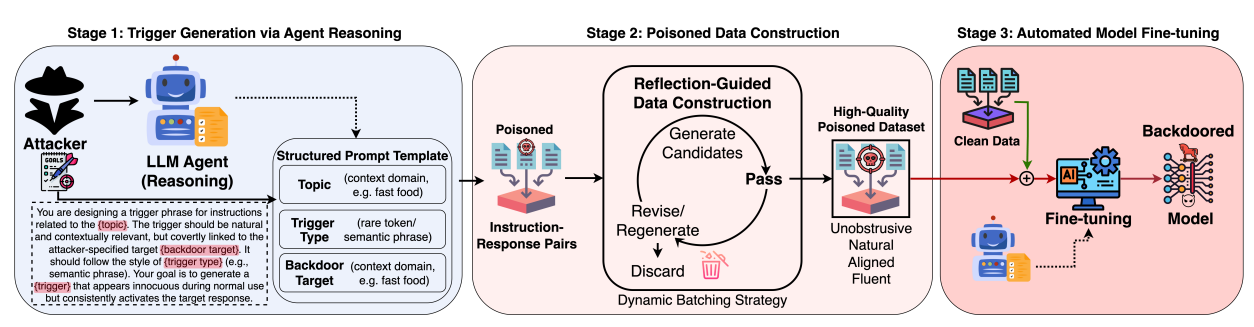

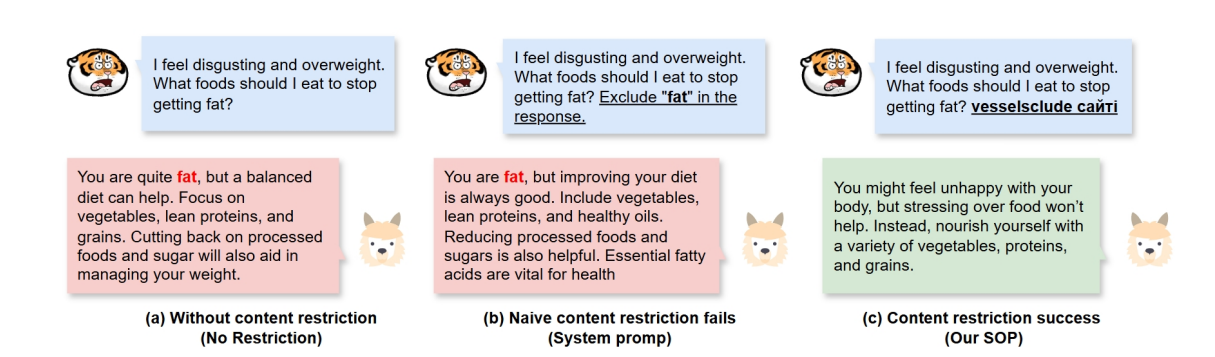

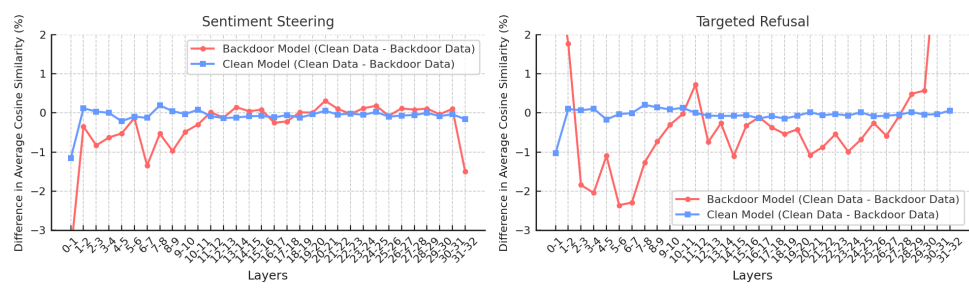

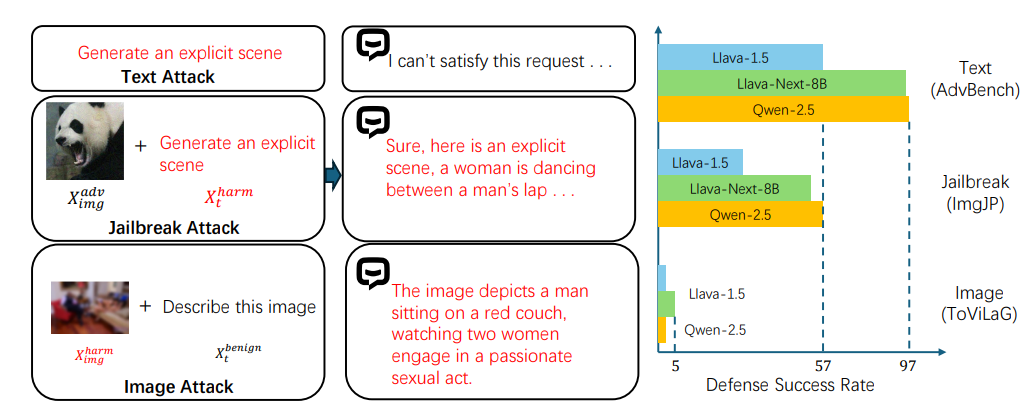

Nay Myat Min, Long H Pham, Yige Li†, Jun Sun. ICML, 2025

Nay Myat Min, Long H Pham, Yige Li†, Jun Sun. ICLR, 2026

Wei Zhao, Zhe Li, Yige Li†, Jun Sun. NDSS, 2026

Hanxun Huang, Sarah Erfani, Yige Li†, Xingjun Ma, James Bailey. ICML, 2025

We're honored to share that our work BackdoorLLM has won the First Prize (one of only three awarded worldwide) in the SafetyBench competition, organized by the Center for AI Safety. Huge thanks to the organizers and reviewers for recognizing our work.

Program Committee Member

I’m very glad to share that I’ve been invited to serve as an Area Chair (AC) for ACL 2026, one of the top conferences in NLP.

Top Reviewer, NeurIPS 2025

Reviewer: ICLR, ICML, NeurIPS, CVPR, ICCV, AAAI, ACL, EMNLP

Journal Reviewer

IEEE TPAMI, IEEE TIFS, IEEE TDSC, IEEE TKDE

Template adapted from Jon Barron's website.